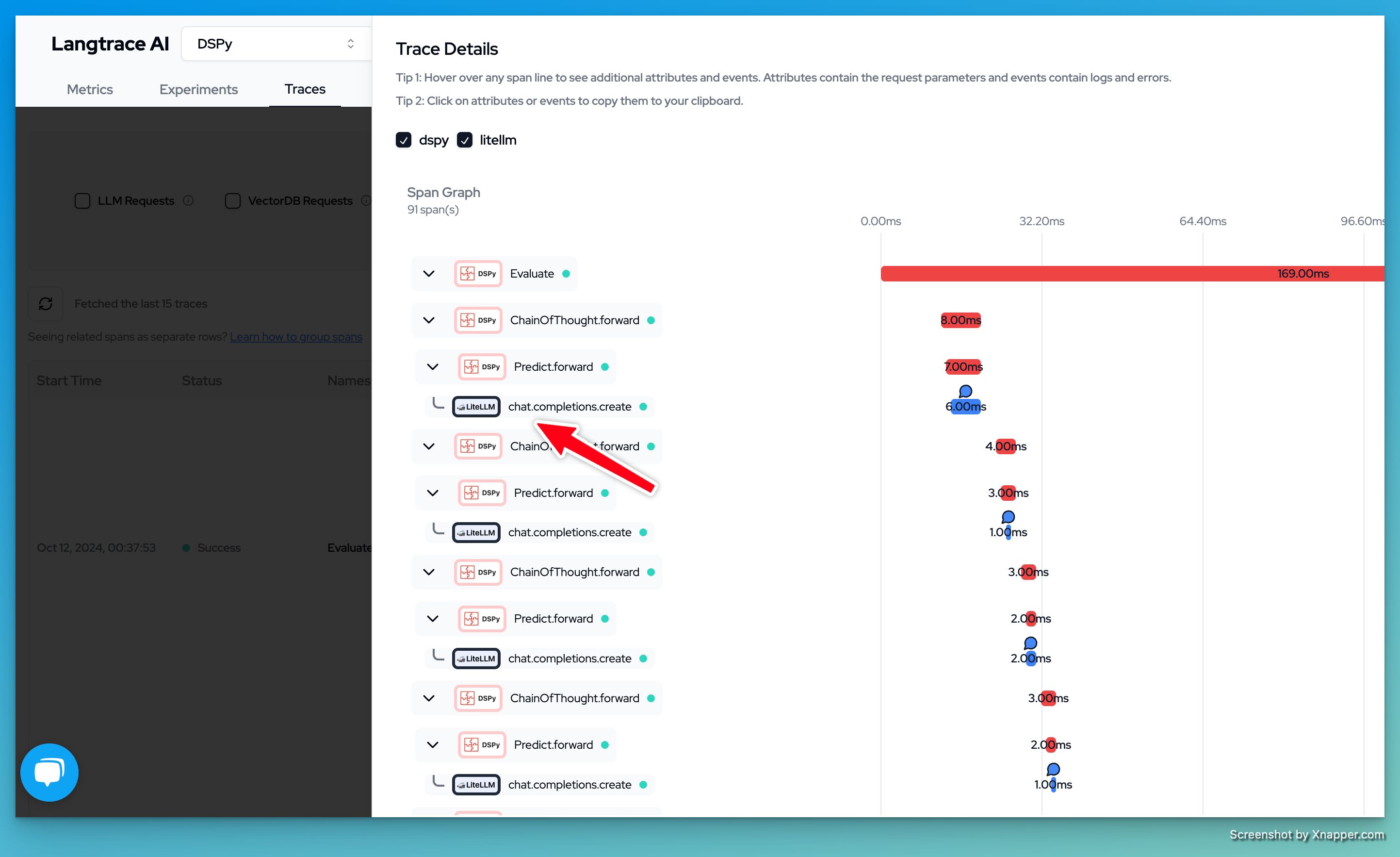

Langtrace integrates directly with LiteLLM, offering detailed, real-time insights into performance metrics such as cost, token usage, accuracy, and latency.

You’ll need an API key from Langtrace. Sign up for Langtrace if you haven’t done so already.

LiteLLM SDK

- Set up environment variables:

export LANGTRACE_API_KEY=YOUR_LANGTRACE_API_KEY

- Add the callback to your LiteLLM client:

import litellm

litellm.success_callback = ['langtrace']

- Use LiteLLM completion

from litellm import completion

response = completion(
    model="gpt-4",
    messages=[
        {"role": "user", "content": "this is a test request, write a short poem"}
    ],
)

print(response)
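A missing key is easy to overlook, so the setup steps above can be wrapped in a small guard that fails early when `LANGTRACE_API_KEY` is not set. This is a minimal sketch, not part of the Langtrace or LiteLLM API: `require_langtrace_key` and `enable_langtrace` are hypothetical helper names introduced for illustration, and only the `litellm.success_callback` assignment comes from the steps above.

```python
import os


def require_langtrace_key(env=None):
    """Return LANGTRACE_API_KEY, or raise if it is unset or blank.

    Hypothetical helper: `env` defaults to os.environ and can be
    replaced with a plain dict for testing.
    """
    env = os.environ if env is None else env
    key = env.get("LANGTRACE_API_KEY", "").strip()
    if not key:
        raise RuntimeError(
            "LANGTRACE_API_KEY is not set; export it before enabling the callback"
        )
    return key


def enable_langtrace(env=None):
    """Check the key first, then register the callback as in the step above."""
    require_langtrace_key(env)
    # Imported lazily so the key check itself works without LiteLLM installed.
    import litellm

    litellm.success_callback = ["langtrace"]
```

Calling `enable_langtrace()` once at startup, before the first `completion` call, keeps the check and the callback registration in one place.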

LiteLLM Proxy

- Create config.yaml:

model_list:
  - model_name: gpt-4
    litellm_params:
      model: openai/gpt-4

litellm_settings:
  callbacks: ["langtrace"]

environment_variables:
  LANGTRACE_API_KEY: <YOUR_LANGTRACE_API_KEY>

- Run LiteLLM Proxy

litellm --config config.yaml --detailed_debug

- Test your setup

curl --location 'http://0.0.0.0:4000/chat/completions' \
    --header 'Content-Type: application/json' \
    --data ' {
      "model": "gpt-4",
      "messages": [
        {
          "role": "user",
          "content": "what llm are you"
        }
      ]
    }'
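The same test request can also be sent from Python. The sketch below uses only the standard library and mirrors the curl command above (same URL, header, and JSON body). The function names `build_chat_request` and `send_chat_request` are hypothetical, and the code assumes the proxy is listening at the address shown in the curl example.

```python
import json
import urllib.request

# Same address as the curl example; adjust if your proxy runs elsewhere.
PROXY_URL = "http://0.0.0.0:4000/chat/completions"


def build_chat_request(content, model="gpt-4", url=PROXY_URL):
    """Build (but do not send) the POST request that the curl command issues."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": content}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat_request(request, timeout=30):
    """Send the request to a running proxy and return the decoded JSON body."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

With the proxy running, `send_chat_request(build_chat_request("what llm are you"))` sends the same payload as the curl command. Building and sending are kept separate so the request can be inspected without a live proxy.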

Want to see more supported methods? Check out the sample code in the Langtrace Langchain Python Example.
